Sec. Chao’s Self-Driving Gaffe is a Lesson, Not a Failure

May 11, 2017

For all of the hype generated around self-driving cars, there is an equal amount of confusion about the technology and its implications for drivers – even, as it turns out, for the nation’s highest-ranking transportation official.

In an interview last week, Transportation Secretary Elaine Chao told Fox Business that some cars on the road today can drive themselves without human monitoring or intervention. Based on existing technology, she said, it would be safer for humans to have partial control rather than ceding it all to automated systems.

“We have now self-driving cars. We have level-two self-driving cars. They can drive on the highway, follow the white lines on the highway, and there’s really no need for any person to be seated and controlling any of the instruments,” the secretary said.

To be absolutely clear: this is not true. Fully self-driving cars that do not require human intervention are still years – if not decades – away from reaching the market.

Some cars on the road today are equipped with partially automated features like lane centering, automatic emergency braking to prevent collisions, and limited driver assistance systems (e.g. Tesla’s Autopilot, Audi’s Stop&Go traffic jam assist). But these features still do not make cars fully capable of driving themselves in all, or even most, situations.

Automated systems on the market today require a human behind the wheel to monitor the road and take over control in case of an emergency or system failure. Tesla’s Autopilot, for example, instructs drivers to keep their hands on the steering wheel and eyes on the road – and issues audible and visual alerts when they fail to do so.

But confusion about the capabilities of automated vehicle systems is not uncommon – and perhaps not even unwarranted.

Discussions of self-driving vehicles have largely focused on the end goals: saving the 35,000-40,000 lives lost to traffic incidents every year, making mobility more affordable and accessible, and reducing congestion caused by human error.

In light of this, Sec. Chao’s misconception should not be dismissed as either a gaffe or a lack of understanding. Instead, it should serve as a lesson for industry leaders and policymakers, who have more closely resembled two ships passing in the night.

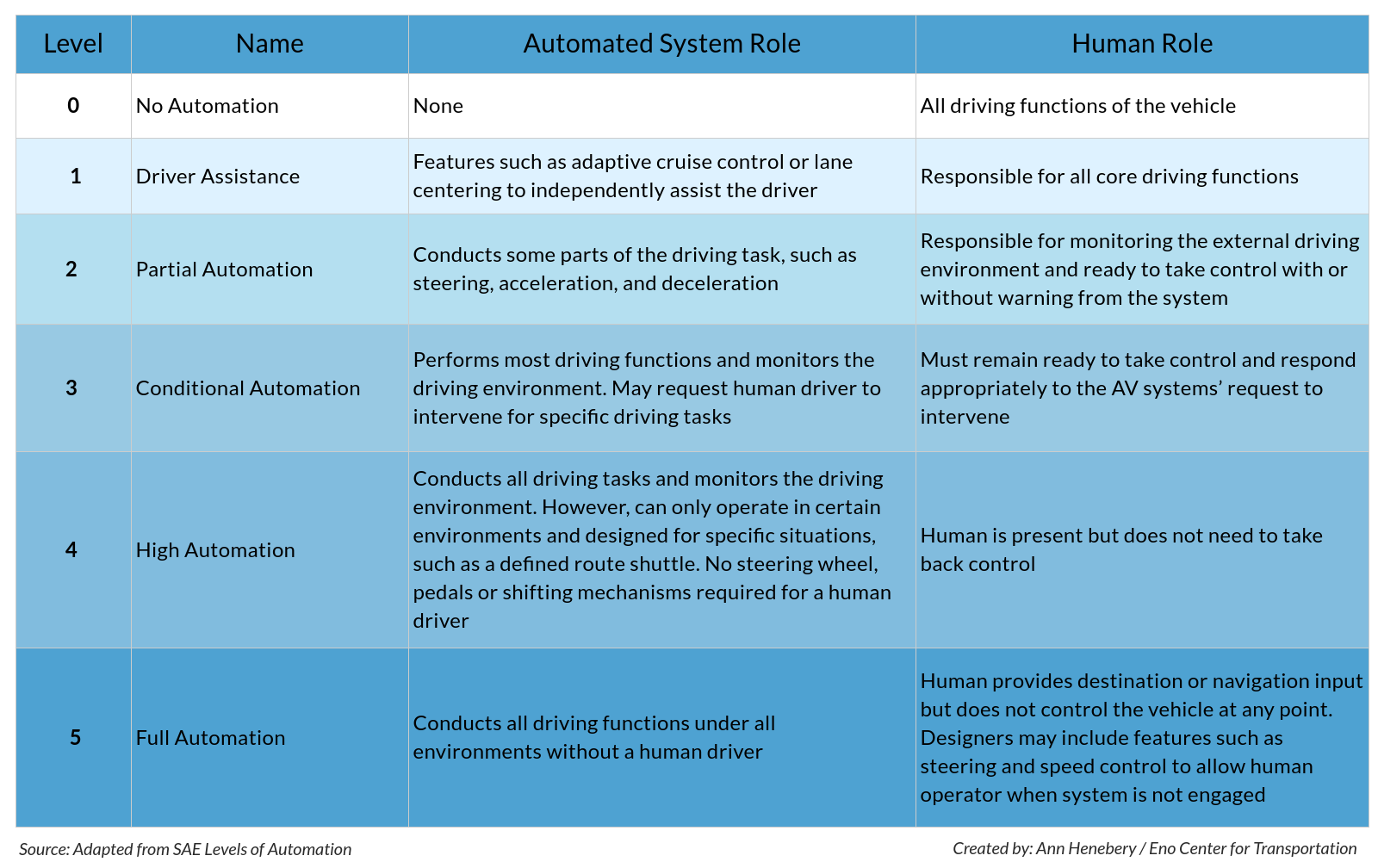

Chao took the important first step of trying to understand the levels of vehicle automation. These levels, as outlined by SAE International (formerly the Society of Automotive Engineers), provide a foundation for concrete discussions about the precise capabilities and limitations of AVs as automated features are progressively integrated.

The levels of automation range from level 0 to 5, on a scale from no automation to full automation. As more functions of the vehicle are automated, the roles of the human and automated system for driving begin to switch.

Under this classification system, Tesla vehicles equipped with Autopilot have level 2 (or partially automated) capabilities: the automated system conducts part of the driving task, but the human behind the wheel is still responsible for monitoring the road and must be prepared to take over control immediately if the system encounters a situation that it cannot handle safely.

Secretary Chao is right that some level 2 AVs are mostly capable of driving on highways today without incident. But this has led to a false sense of security, as demonstrated in numerous YouTube videos of Tesla owners getting distracted, napping, or even playing Jenga and checkers behind the wheel. Level 2 systems have very limited parameters in which they can operate safely, such as driving on a highway in sunny conditions.

This is not to say that level 2 is inherently unsafe. On the contrary, a National Highway Traffic Safety Administration (NHTSA) report regarding the only known fatality under level 2 automation found that, while both the human driver and the automated system failed to prevent the collision, Autopilot has reduced the Tesla crash rate by 40 percent.

Automated systems at level 3 or higher are not yet on the road, but many AV developers plan to introduce them in the coming years – and they will ultimately be safer than human drivers. When these systems arrive, it will be important for the public to clearly understand their capabilities and limitations, or else we risk senseless tragedies caused by misunderstandings.

Even worse, we could fail to reduce traffic fatalities by miscommunicating the potential of AVs and inadvertently inciting public concern about increased automation. This was the case with Sec. Chao last week when she stated, “I think the public is concerned about the safety… there are different levels of technology, and they’re being worked out. So at a level 2, that’s probably safer than a level 5 or a level 4 self-driving car… there’s a lot of experimentation going on.”

While the incredible potential of AVs is exciting, nuance must not be sacrificed for the sake of hype. Consumers must be convinced that the technology is being developed safely and will not endanger others on the road – and this all begins with leadership from government officials and industry leaders.

For this reason, the Eno Center for Transportation recently released a report, Beyond Speculation: Automated Vehicles and Public Policy, to help policymakers understand AVs and enact sound regulations to safely guide them from testing to deployment.

The secretary said it herself: “We can’t, as regulators, do a good job if there is a conflict between the galloping rate of technology and the consumer, passenger acceptance as to what is possible.”